YouTube content creators often refer to themselves as “hybrid shooters” because they use cameras that combine still photography and videography in one body. The term has been misused for a long time, and I feel it necessary to set the record straight.

That matters more than ever in this age of multi-channel content creation. To me, a hybrid shooter is really someone who uses multiple devices to create content, not someone who takes one device built with multiple capabilities and repurposes it strictly for video. But first, let’s explore a bit of history.

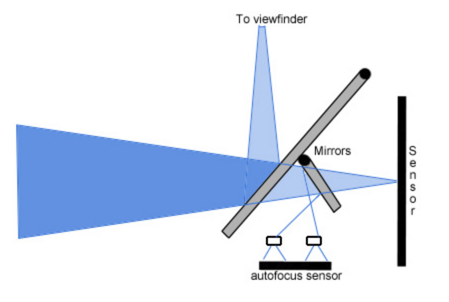

The term hybrid actually came into being in reference to the first mirrorless stills camera. It had nothing to do with video. It referred to the hybrid function of providing both a viewfinder and a live-view back panel to still photographers. In traditional DSLR photography, a mirror redirects the incoming light to a separate optical viewfinder system, then flips out of the way during the exposure so that the light reaches the sensor.

This optical viewfinder provided the ability to “preview” the shot prior to capture. But in my last DSLR, at least, the preview often showed a wider field of view than the sensor actually captured, so elements close to the edge of the frame were sometimes cut off in the final image. That was a bit of an issue for close-cropped, tight shots, but you learned to adjust. The optical viewfinder provided only an estimate of the shot.

The other big challenge with optical systems is that the brightness of the image in the optical viewfinder in no way matched the exposure of the final image captured by the sensor. So if you had a high ISO setting, a slow shutter speed, or a stopped-down aperture, you really had no idea what the final image would look like. And if you were using flash, an optical viewfinder was even more difficult to use, since your scene would be dark until the flash fired.

Later DSLRs included live histograms in the live view display, at least providing some information about exposure before taking the shot. Older DSLRs only displayed a histogram after the shot was taken. So, much like film photography, you really had no idea of the quality of the shot until it was taken.

Not so with “hybrid” mirrorless cameras, which presented and continue to present the same image on both displays: viewfinder and rear LCD. The first of these, the Lumix G1, was produced by Panasonic in 2008. In these cameras, the view is what the sensor sees, with both displays fed from the sensor readout. And in today’s mirrorless cameras, you can also simulate what the final shot will look like based on the settings you select. Those digital processors are very smart. Some things can’t be simulated, like slow shutter speeds used with moving subjects, but overall, you get a great estimate of the final shot.

But someone also had the idea of including video capability in a stills camera, and Canon helped lead the way by including full HD video in the 5D Mk II in 2008. Sensor, processor and storage technologies had advanced far enough to make it possible. Remember that video is really just still photography at a very high rate: 24, 30, 60 or more still frames per second. These are combined to produce what our eyes perceive as smooth motion. The technology to capture that many frames that quickly was finally available. Compared to the very first known recorded photograph, which apparently took 12 hours for a single image, 60 frames a second is pretty darned fast!
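To put those frame rates in perspective, here is a small back-of-the-envelope sketch of how little time the camera has for each frame and how many stills pile up in a single minute of footage. The function names are made up for this illustration, and the numbers are plain arithmetic rather than the specs of any particular camera.

```python
# Illustrative arithmetic only; the helper names are hypothetical.

def frame_interval_ms(fps: float) -> float:
    """Time available to capture one frame, in milliseconds."""
    return 1000.0 / fps


def frames_captured(fps: float, seconds: float) -> int:
    """Number of still frames recorded over a clip of the given length."""
    return round(fps * seconds)


if __name__ == "__main__":
    # The common frame rates mentioned above.
    for fps in (24, 30, 60):
        print(
            f"{fps} fps: one frame every {frame_interval_ms(fps):.1f} ms, "
            f"{frames_captured(fps, 60)} frames per minute"
        )
```

At 60 frames per second, one minute of video works out to 3,600 individual stills, each captured in under 17 milliseconds.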

It seems like a no-brainer today to combine video and still photography in a single device, but they are very different creatures. With movement, light levels are changing constantly, and camera systems need to adjust exposure quickly. Opening and closing the shutter fast enough to record movement also requires care, with specific settings for specific types of movement: smooth with blur vs. smooth with no blur, etc. And then there’s the whole issue of recording sound too, and matching it precisely to the frames it belongs to. And to actually record the footage, you need specialized ways to compress the information for maximum efficiency on storage media. There are frame rates and codecs and LUTs and audio engineering, all of which have little to no application to still photography.

In a way, it makes sense to have both still and video capture on one device. Both record a visual experience. Certainly, in our age of convenience, we demand this. Our cellphones attest to it. But in another way, it really makes no sense. There were so many issues with the Canon EOS R5 and its video capabilities when it was released in 2020 that Canon eventually released the Canon EOS R5 C, which includes two separate control systems: one for still photography and one for videography. There are two different sets of menus, different setting choices and different display layouts, all in one camera. You really have two cameras in one.

I personally don’t know that many people who use their camera equally for stills and for video. I don’t. I have the Canon EOS R5, but don’t shoot video with it, not even short clips. It is too big and bulky to hold while moving around, and the features and functions I need for video are best found elsewhere. I have a Sony vlogging camera, a DJI Osmo Pocket for capturing footage on the move, and a GoPro Hero 10 for hands-free video. And don’t forget the drone for those overhead context shots. Most of the people I know personally, and most of those I follow, also don’t use their cameras equally for both stills and video.

So it seems that the “demand” for both still and video capability in one device might be a bit of a myth. I wish it were possible to build a modular camera, rather than a hybrid camera. You could pick and choose the features/functions you wanted. Just slap on a new module and you have fast action still photography one day and maybe cinematic video the next. Buy a new module when you need it – or don’t. Those would be true hybrid cameras. Maybe someday.